Fitting an underlying body model to 3D clothed human assets has been extensively studied, yet most

approaches focus on either single-modal inputs such as point clouds or multi-view images alone, often

requiring known metric scale, a constraint that is frequently unavailable, particularly for AIGC-generated

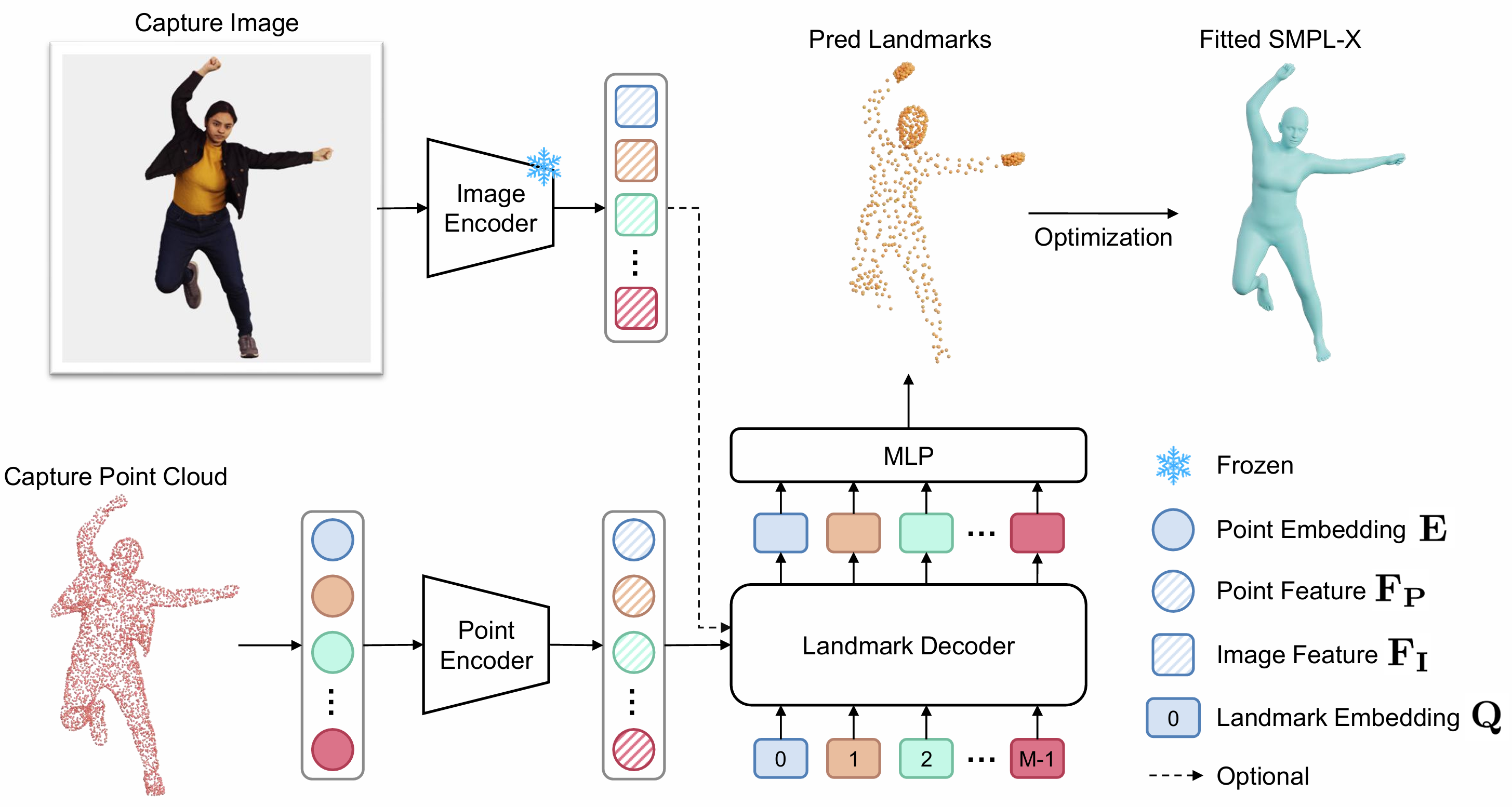

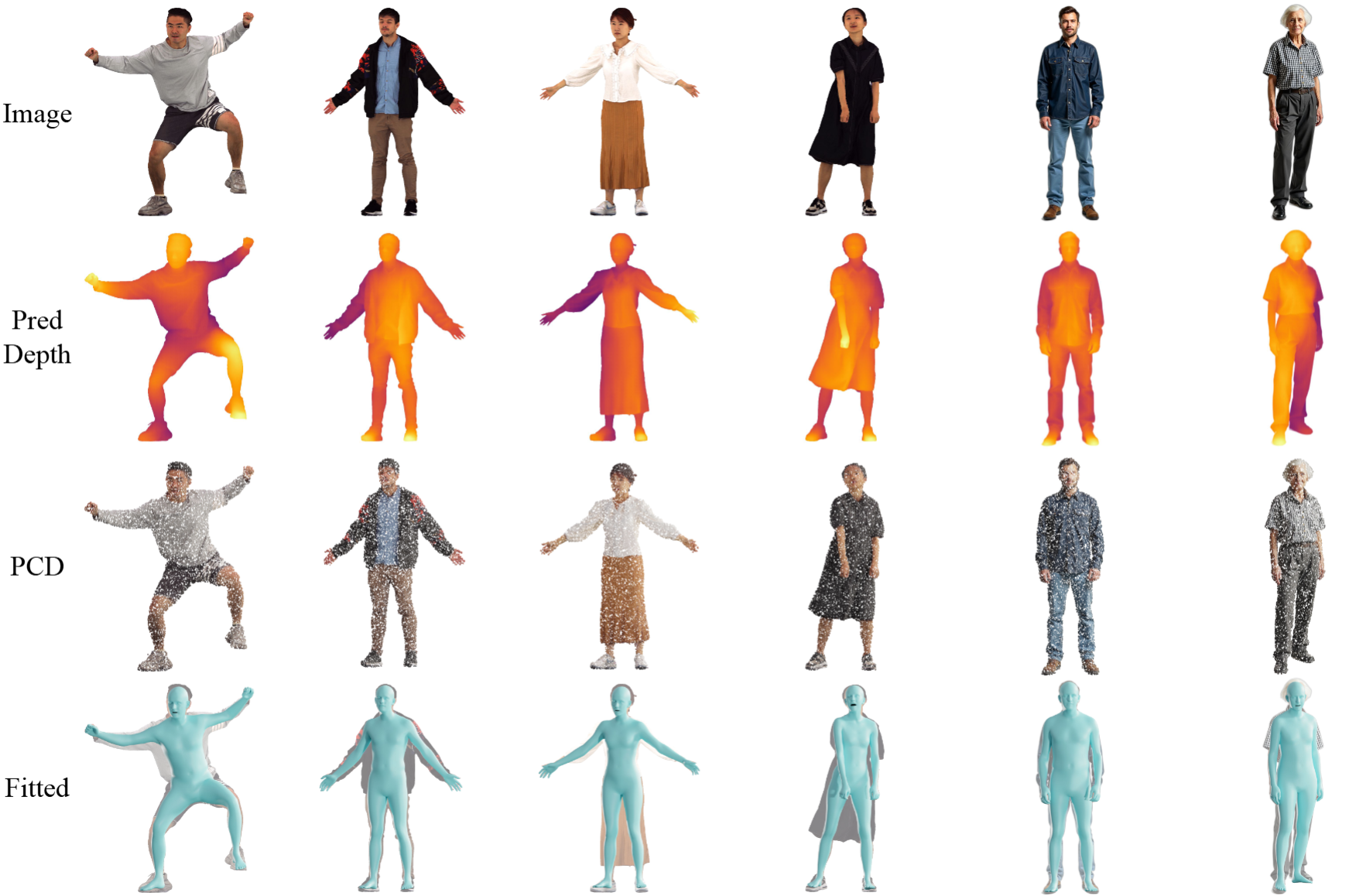

assets where scale distortion is prevalent. We propose OmniFit, where "Omni" signifies our

method's ability to seamlessly handle diverse multi-modal inputs such as full scans, partial depth, and image

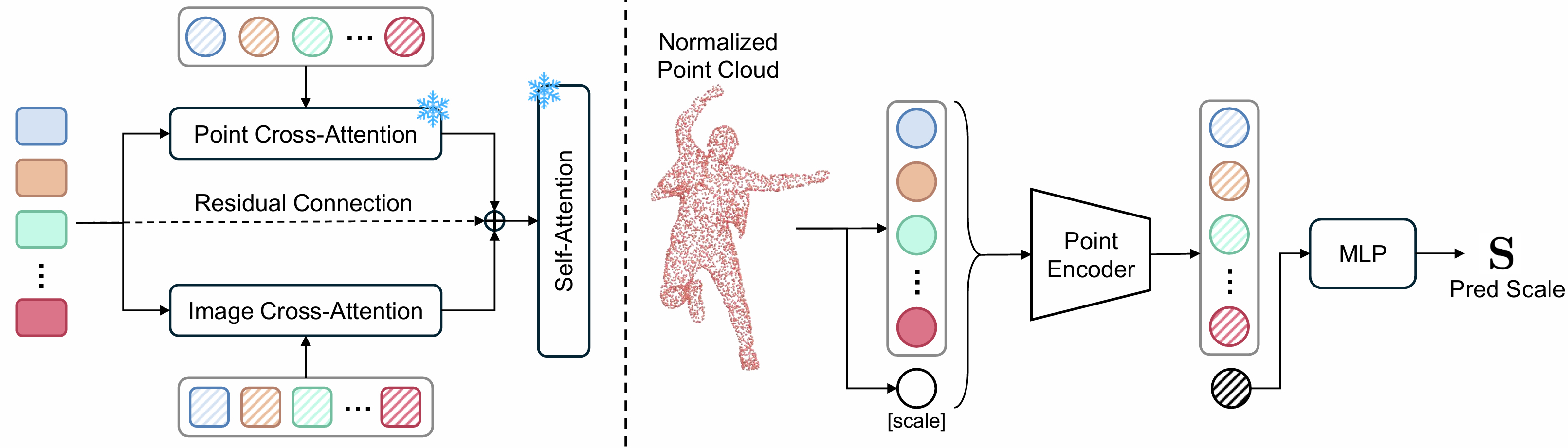

captures, while remaining scale-agnostic across both real and synthetic assets. Our key innovation is a simple

yet effective conditional transformer decoder that directly transforms surface points into dense body landmarks,

which are subsequently used for SMPL-X parameter fitting. Additionally, an optional plug-and-play image adapter

enriches geometric details with visual cues to address potential incompleteness. We further introduce a dedicated

scale predictor to resize subjects into canonical proportions. Remarkably, OmniFit substantially

outperforms state-of-the-art methods by 57.1%-70.9% across daily and loose

clothing scenarios, making it the first body fitting method to surpass multi-view optimization baselines and the

first to achieve millimeter-level accuracy on CAPE and 4D-DRESS benchmarks.
